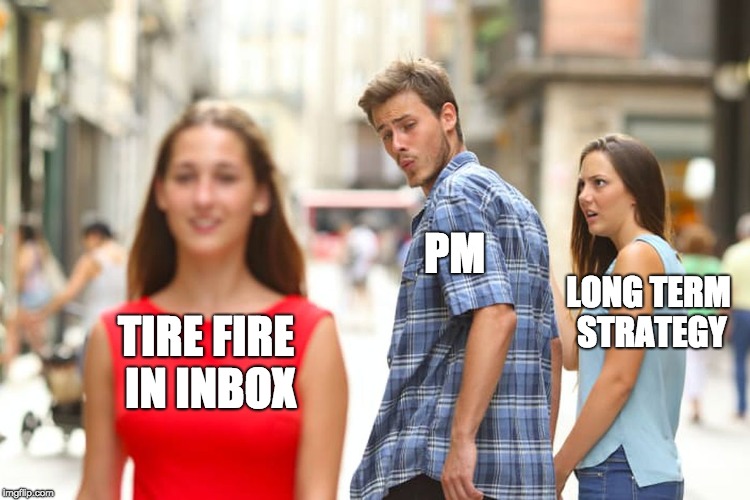

This is part of a short series about the talk I gave at Product Camp Boston, entitled “Getting Better at Getting Better: A PM Kaizen.” A general introduction to the talk is here and you can view the slides.

The number one complaint I hear from PMs is the tyranny of the calendar. “There’s no time.” “I’m in meetings all day, all week.” “I’m staying until 8 to get work done.” “I’m logging in after the kids are in bed and staying up until 2.” “Let’s meet two weeks from now, when I have free time.”

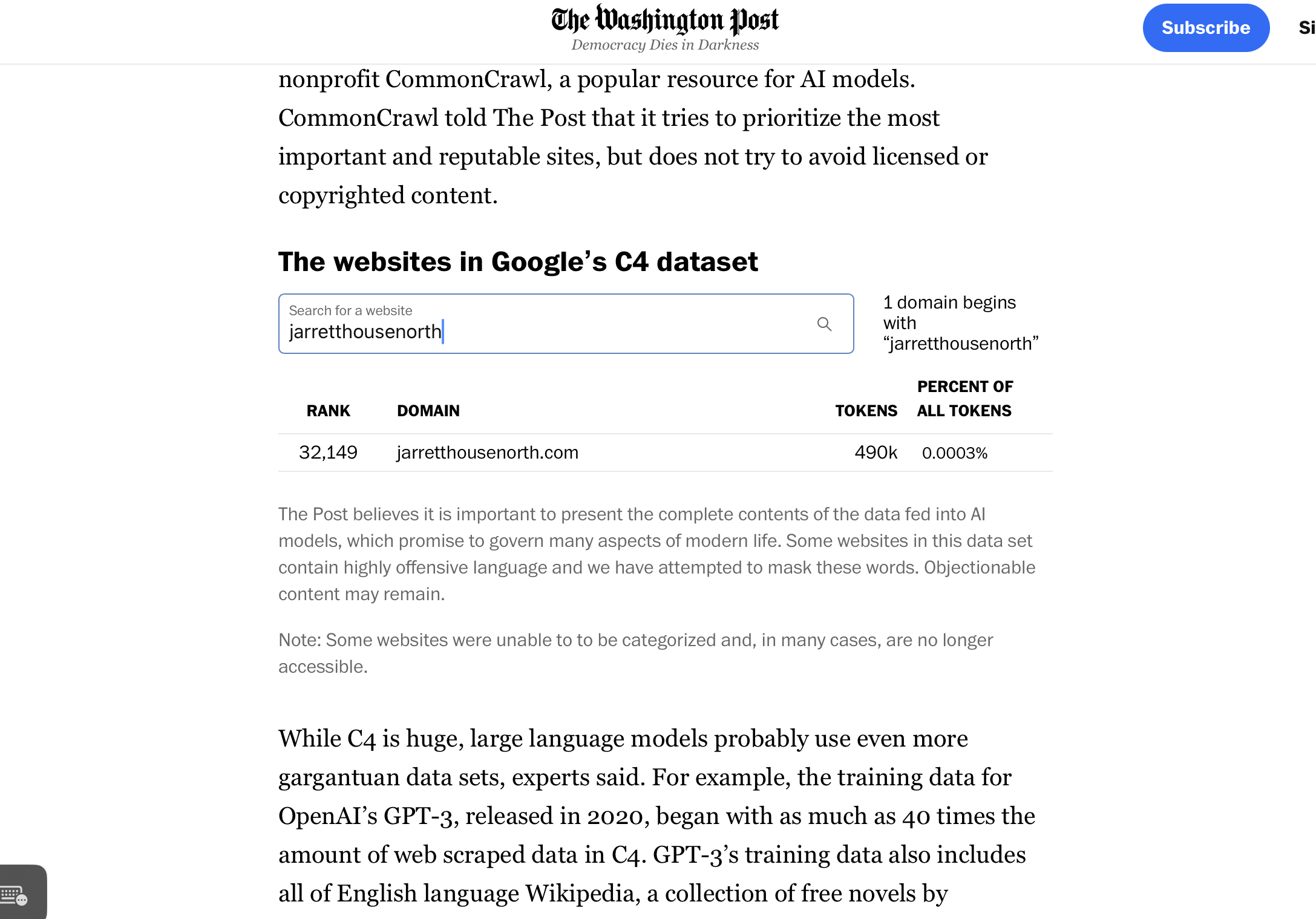

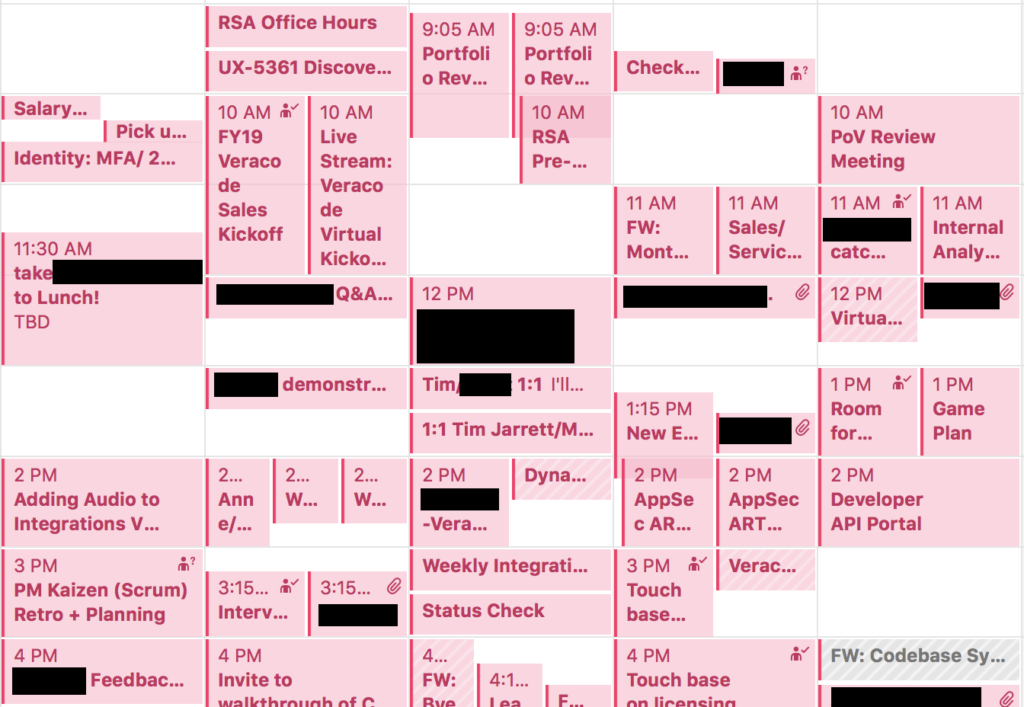

The net result is a calendar like the above, with about three or four free hours scattered throughout a work week. There are all kinds of problems with this way of working. One of the worst is something I first recognized while building software for developers, and that I now recognize in my own calendar: the time it takes to build context.

Knowledge workers, like software developers and product managers (and marketers and others), rely on an understanding of the context in which they’re doing their work to be effective. As a software developer, you can’t effectively debug a problem unless you first build a mental model of how that part of the software works. That’s time-consuming, and if you’re interrupted you have to start all over again. (There’s a great illustration of this challenge for programmers by Jason Heeris.) Some PM work (strategy, building a roadmap, understanding user problems) needs that mental-model time too. A half hour or an hour here or there doesn’t really cut it.

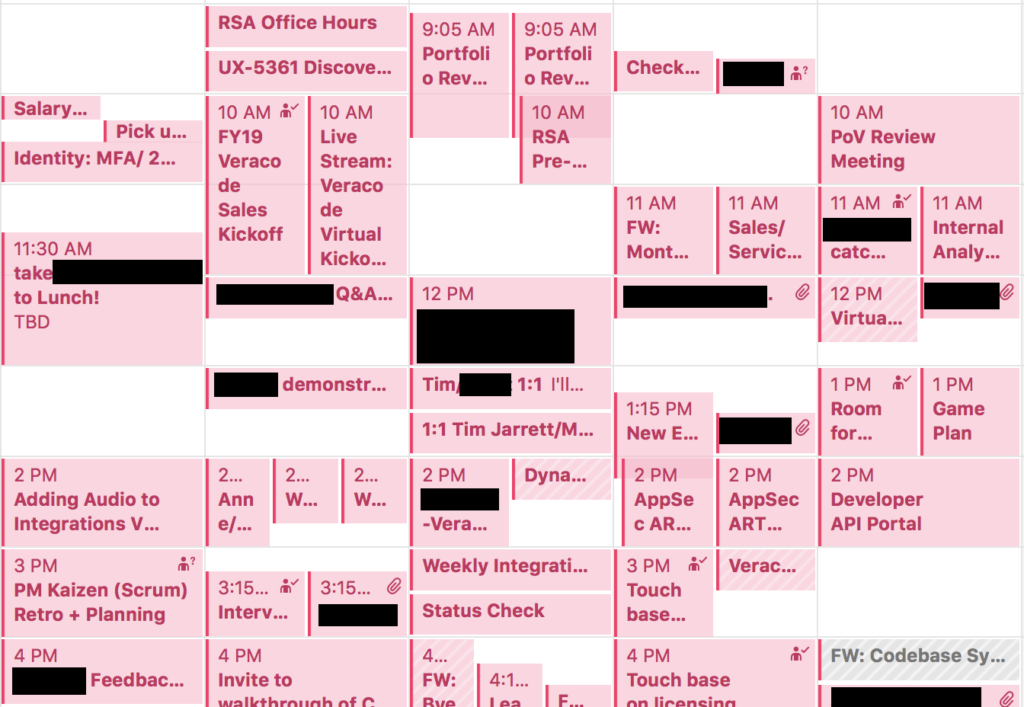

But many PMs I know are achievement-oriented. We like to make lists and check off items. So what do we do? We spend all our downtime getting stuff done. It isn’t the important, strategic stuff that needs a half hour of context building first, because we don’t have time for that. It’s responding to email, putting out fires in inboxes, answering customer feedback.

The strategic, in other words, gets crowded out by the tactical.

There are many ways to solve this problem. One of them, which I heartily recommend, is becoming more effective at saying “no.” That has its own challenges. You can just say “no” and leave the requester with no way to get that request filled. Unless you’re uninterested in the welfare of your customers and the bottom line of your company, that often isn’t the behavior that maximizes long-term outcomes. So you may find yourself trying to understand the breakdown in the system that led that person to your desk and left you as the only person in the organization who can help them solve their problem, and suddenly you’re back to square one.

If you’re good at saying no when you can, and, when you can’t, at diagnosing the organizational breakdown and taking steps to shift the permanent solution elsewhere in the organization, then that’s an effective way to keep your calendar clean. That also means, though, that “just saying no” isn’t really within reach for most PMs.

So what’s the alternative? I would argue that we have to find a way to systematically think about our work, in such a way that we don’t constantly have to reconstruct our context before we can move the work to the next step. I’ll discuss this more next time.

Follow-up reading: The challenge of being “the only person in the organization that can help solve the problem” is covered extensively in the writings of Eliyahu M. Goldratt on the Theory of Constraints. He calls the process of finding these “only people” identifying the constraint in a process, and recommends that you subordinate the rest of the process to the constraint and then find ways to elevate it. There’s some practical discussion about elevating constraints in the context of software development and IT in the classic DevOps novel The Phoenix Project.