The Congressional report on the OPM breach is available. More than 200 pages of non-searchable PDF. Set aside some time for a little light reading.

Category: Security

Instant replay: Apple’s 2016 Black Hat presentation

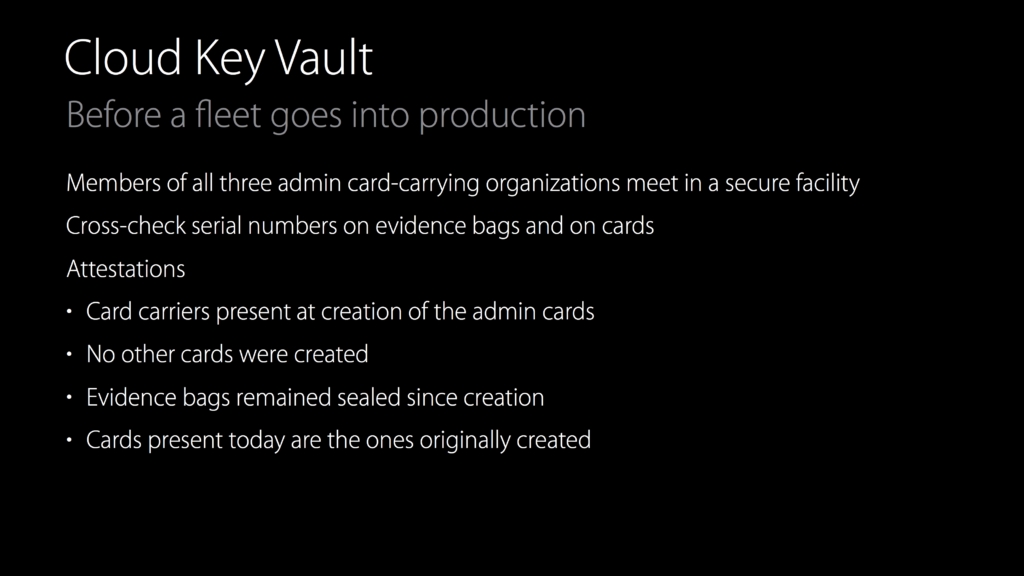

MacStories: Apple’s Presentation at Black Hat Now Available to Watch in Full. For those like myself for whom the stories were tantalizing teasers, the presentation is now available on YouTube. I love the illustration of the “physical one-way hash function.”

BlackHat 2016: roundup of iOS security

A few interesting presentations last week at BlackHat dealt with iOS security. The most interesting was Ivan Krstić’s presentation taking us “Behind the Scenes with iOS Security.” Krstić, Apple’s head of security engineering and architecture, reviewed the implementation of features like Keychain Backup, file encryption, sharing of credit card information across devices, etc.

I particularly enjoyed the description of how the cloud-based key vaults for iCloud are protected:

Don’t lose the keys: Microsoft and Windows Secure Boot

AppleInsider: Oops: Microsoft leaks its Golden Key, unlocking Windows Secure Boot and exposing the danger of backdoors. An interesting development in the wake of the Apple/FBI standoff over iPhone encryption. If a secret key exists, the odds are very good that it will fall into the hands of an unintended recipient. See also: technical explanation and disclosure of the hack.

Veracode Hackathon IX

It’s the semiannual Veracode hackathon, so I’m behind on blogging. Again.

It’s that most wonderful time of the year (no, that other one). My company Veracode is hosting its ninth Hackathon this week, and it’s been interesting. The theme is 90s Internet Hackers, or as we say in my house, “Saturday.” Seriously: putting together the radio station was just a matter of looking in my iTunes library, and my programming skills aren’t too much more current than the 1990s. (AppleScript, anyone?)

Between the bake sale, the people doing caffeine hacking at a table in the cafe, the puzzle hunt, and everything else, it’s … interesting around here.

What’s at stake in the FBI iPhone case? Your privacy and safety.

NPR: Encryption, Privacy Are Larger Issues Than Fighting Terrorism, Clarke Says. With all due respect to Richard Clarke, who sits on the board of my employer and who has been on the right side of arguments about cybersecurity for about 20 years: of course they are. Of course, the correction should probably be aimed at NPR’s Writer of Breathless Headlines.

As I’ve written before, it’s ironic that a federal government that can’t secure its own systems is presuming to dictate terms of secure computer design. What explains it is a continued reliance on magical thinking: a supposition that, if we try hard enough, we can overcome any barrier. In this case, the barrier is the ability to offer a secret backdoor to law enforcement in an encryption technology without endangering all other users of that encryption technology. Sadly, President Obama appears to subscribe to this magical thinking:

If, technologically, it is possible to make an impenetrable device or system where the encryption is so strong that there’s no key – there’s no door at all – then how do we apprehend the child pornographer? How do we solve or disrupt a terrorist plot?

The whole point of cryptography that works is that there’s no door at all for unauthorized users. If you put one in, you have to put the key somewhere, and you open yourself up to having it stolen, or having someone figure out how to get in. And if you ask for a special version of an operating system that can unlock a locked iPhone, you end up with software that can be applied without restriction to every locked phone, by the government, by the next 100 world governments that ask for access to it, and by whoever manages to breach federal computers and steal the software for their own use.

This would be a fun theoretical exercise, as it mostly was back in the days of the Clipper Chip debates, were it not for the vast businesses that are built on secure commerce, protected by cryptography; the lives of dissidents in totalitarian countries who seek to protect their speech and thoughts with cryptography; the national secrets that are protected by cryptography; the electronic assets of device users everywhere that are protected from criminals by cryptography. But because of all those things, it is anything but a theoretical exercise to propose to compel a computer manufacturer to embed a back door system or, worse, to turn over their intellectual property to the government so that it can add such a feature.

And Clarke’s analysis says that the last thing is what this is all about: bringing technology companies to heel by setting a precedent that they must do whatever the government asks, no matter how much it endangers users of their products. Read this exchange:

GREENE: So if you were still inside the government right now as a counterterrorism official, could you have seen yourself being more sympathetic with the FBI in doing everything for you that it can to crack this case?

CLARKE: No, David. If I were in the job now, I would have simply told the FBI to call Fort Meade, the headquarters of the National Security Agency, and NSA would have solved this problem for them. They’re not as interested in solving the problem as they are in getting a legal precedent.

If Clarke, who helped to shape the government’s response to the danger of cyberattacks, says that the NSA could have hacked this phone for the FBI, I believe him. This is all about making Apple subordinate to the whims of the FBI. The establishment of the right of the government to read your mail above all rights to privacy is only the latest step in a series of anti-terrorism overreactions that brought us such developments in security theater as the War on Liquids. Beware of anyone telling you otherwise.

The ironic battle over crypto

Bruce Schneier: Security vs. Surveillance. As the dust finally settles from the breach of the US Office of Personnel Management, in which personal information for 21.5 million Americans who were Federal employees or who had applied for security clearances with the government was stolen, I find it unbelievable that other parts of the federal government are calling for weakening cryptographic protections.

Because that’s what the call for law enforcement backdoors is. There’s a certain kind of magical thinking in law enforcement and politics that says we should be able to have things both ways—encrypt data to keep it safe from bad guys while letting us in. It doesn’t work that way. If the crypto algorithm has a secret key, it will be found. Or stolen, if OPM tells us anything about the state of security in the federal government.
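To make the "secret key" problem concrete, here is a minimal sketch of a key-escrow envelope. Everything in it is an assumption for illustration: the function names are hypothetical, and the cipher is a toy SHA-256 keystream that is not secure and stands in for real cryptography only so the example runs on the standard library. The point is structural: once every message key is also wrapped under one escrow key, whoever holds that key reads everything.

```python
import hashlib
import secrets


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """Toy stream cipher: XOR data with a SHA-256 counter keystream. NOT secure."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        block = hashlib.sha256(key + offset.to_bytes(8, "big")).digest()
        chunk = data[offset:offset + 32]
        out.extend(b ^ k for b, k in zip(chunk, block))
    return bytes(out)


def encrypt_with_escrow(message: bytes, user_key: bytes, escrow_key: bytes) -> dict:
    """Encrypt under a fresh message key, wrapped once for the user and once for escrow."""
    message_key = secrets.token_bytes(32)
    return {
        "ciphertext": keystream_xor(message_key, message),
        "wrapped_for_user": keystream_xor(user_key, message_key),
        "wrapped_for_escrow": keystream_xor(escrow_key, message_key),
    }


def decrypt(envelope: dict, wrapping_key: bytes, slot: str) -> bytes:
    """Unwrap the message key from the named slot, then decrypt the ciphertext."""
    message_key = keystream_xor(wrapping_key, envelope[slot])
    return keystream_xor(message_key, envelope["ciphertext"])


# Hypothetical scenario: two unrelated users, one shared escrow key.
escrow_key = secrets.token_bytes(32)
alice_key = secrets.token_bytes(32)
bob_key = secrets.token_bytes(32)
alice_msg = encrypt_with_escrow(b"alice's tax return", alice_key, escrow_key)
bob_msg = encrypt_with_escrow(b"bob's bank login", bob_key, escrow_key)

# Each user can read only their own message with their own key...
alice_reads = decrypt(alice_msg, alice_key, "wrapped_for_user")

# ...but a single stolen copy of the escrow key opens both.
stolen_key = escrow_key
recovered = [decrypt(m, stolen_key, "wrapped_for_escrow") for m in (alice_msg, bob_msg)]
print(recovered)
```

Real escrow proposals are more elaborate than this, but they share the same shape: one extra key slot on every message, and one high-value secret that has to be stored somewhere.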

I’d like a presidential candidate who calls for stronger, not weaker, encryption, and who starts by demanding it of federal software systems.

Eight years in

Today is the eighth anniversary of my first day at Veracode. It’s something I don’t talk as much about here, primarily because it keeps me so busy that I can’t write here very much. But it’s interesting to step back and understand how much things have changed—and how much they haven’t.

Here’s one of the first things I wrote about Veracode, a few days after I started. What hasn’t changed is the fallacy of trying to stop exploitation of application layer vulnerabilities by going after the network, or as Chris Wysopal said, “doubling the number of neighborhood cops without repairing the broken locks that are on everyone’s front doors.”

What has changed? Well, we were a tiny, scrappy little company when we started. But we just picked up senior sales and marketing leadership with pedigrees from RSA and Sophos, and we’re a lot bigger than we were eight years ago. It’s a fun day to be at Veracode, realizing just how rapidly we’ve grown.

On the legality of peeping Toms

Boing Boing: Free Stanford course on surveillance law. Now I know what I’ll be doing in my spare time this month, and you should too.

At last month’s inaugural Black Hat Executive Summit, I learned a few things that surprised me about how existing US law applies to “cyber,” and I expect to continue to be surprised by this course. Probably unpleasantly, but who knows?

In which I look a gift horse in the mouth

Springer has published a bunch of its books online for free. (Hundreds more were free until this morning but the plug has been pulled.) I went looking to see what I could find. There are some interesting finds there, including a festschrift for Ted Nelson, the inventor of hypertext. And, relevant to my work interests, a text called The Infosec Handbook.

What’s that, you say? A free textbook on information security? Sign me up! Well, not so fast, pilgrim.

Admittedly, I come to the topic of information security with a very narrow perspective—a pretty tight focus on application security. But within that topic I think I’ve earned the right to cast a jaundiced eye on new offerings, as I’m going to celebrate my eighth year at Veracode next month. And I’m a little disappointed in this aspect of the book.

Why? Simple answer: it’s not practical. The authors (Umesh Hodeghatta Rao and Umesha Nayak) spend an entire chapter discussing various classes of threats, trying to provide a theoretical framework for application security considerations, and discussing in the most general terms the importance of a secure development lifecycle. But the SDLC discussion includes exactly one mention of testing, to wit, in the writeup of Figure 6-2: “Have strong testing.” And an accompanying note: “Similarly, testing should not only focus on what is expected to be done by the application but also what it should not do.”

Really?

It’s pretty widely understood in the industry that “focus(ing) on what is expected” and “[focusing] on what [the application] should not do” are two completely different skill sets and that even telling a functional tester what to look for does not ensure that they can find security vulnerabilities. The problem has been well known for so long that we’re nine years into the lifespan of the definitive work on the subject, Wysopal et al.’s The Art of Software Security Testing. But there’s no acknowledgment of any of the challenges raised by that book, including most notably the need to deploy automated security testing to ensure that vulnerabilities aren’t lurking in the software.
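The gap between the two kinds of testing is easy to show in a small sketch. The upload root, the function names, and the two tests below are hypothetical, made up for illustration and not taken from the book or from any real product. A naive path resolver passes the functional test ("does it do what is expected?") and fails the security test ("does it refuse what it should not do?").

```python
import posixpath

UPLOAD_ROOT = "/srv/app/uploads"  # hypothetical storage root


def resolve_naive(filename: str) -> str:
    """Does what the requirement says: maps a file name to a path under the root."""
    return posixpath.join(UPLOAD_ROOT, filename)


def resolve_checked(filename: str) -> str:
    """Same behavior, but rejects any name that resolves outside the root."""
    candidate = posixpath.normpath(posixpath.join(UPLOAD_ROOT, filename))
    if posixpath.commonpath([UPLOAD_ROOT, candidate]) != UPLOAD_ROOT:
        raise PermissionError(f"path escapes upload root: {filename!r}")
    return candidate


def functional_test(resolve) -> bool:
    """What a functional tester checks: expected input gives expected output."""
    return resolve("report.txt") == "/srv/app/uploads/report.txt"


def security_test(resolve) -> bool:
    """What a security tester checks: hostile input must not escape the root."""
    for hostile in ("../../etc/passwd", "/etc/passwd"):
        try:
            resolved = posixpath.normpath(resolve(hostile))
        except PermissionError:
            continue  # rejecting the input is the desired outcome
        if not resolved.startswith(UPLOAD_ROOT + "/"):
            return False
    return True


results = {
    fn.__name__: (functional_test(fn), security_test(fn))
    for fn in (resolve_naive, resolve_checked)
}
print(results)
```

Both implementations look identical to a test suite that only exercises expected inputs, which is why "have strong testing" is not much of a recommendation on its own.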

As for the “eight characteristics” that supposedly ensure that an application is secure, take a look at the list for yourself:

- Completeness of the Inputs

- Correctness of the Inputs

- Completeness of Processing

- Correctness of Processing

- Completeness of the Updates

- Correctness of the Updates

- Maintenance of the Integrity of the Data in Storage

- Maintenance of the Integrity of the Data in Transmission

Really? Nothing about availability. Nothing about authorization (determining whether a user should be allowed to access information or execute functionality). Nothing about guarding against unintended leakage of application metadata, such as errors, identifying information, or implementation details that an attacker could use. And nothing about ensuring that a developer didn’t include malicious or unintended functionality.
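Two of the missing items, authorization and leakage of implementation details, fit in a short sketch. The document store, the handler, and the response format here are all hypothetical and stand in for whatever framework an application actually uses; this is one reasonable pattern, not a complete access-control design.

```python
import logging
import uuid

log = logging.getLogger("app")

# Hypothetical in-memory store; "doc-2" is deliberately malformed (no body).
DOCUMENTS = {
    "doc-1": {"owner": "alice", "body": "Q3 forecast"},
    "doc-2": {"owner": "bob"},
}


def render(record: dict) -> str:
    """Stand-in for real processing; raises KeyError on a malformed record."""
    return record["body"].upper()


def fetch_document(user: str, doc_id: str) -> dict:
    record = DOCUMENTS.get(doc_id)
    # Authorization: the caller must own the document. "Missing" and
    # "not yours" get the same answer so the response does not reveal
    # which document IDs exist.
    if record is None or record.get("owner") != user:
        return {"status": 404, "body": "Not found"}
    try:
        return {"status": 200, "body": render(record)}
    except Exception:
        # Details go to the server-side log; the caller only sees an
        # opaque reference that support staff can look up.
        incident = uuid.uuid4().hex[:8]
        log.exception("render failed for %s (incident %s)", doc_id, incident)
        return {"status": 500, "body": f"Internal error, reference {incident}"}


print(fetch_document("alice", "doc-1"))
print(fetch_document("bob", "doc-1"))
print(fetch_document("bob", "doc-2")["status"])
```

None of the eight "characteristics" in the list would prompt a developer to write either the ownership check or the generic error response.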

The chapter also includes no mention of technologies that can be deployed against application attacks, though this may be a blessing in disguise given the poor track record of web application firewalls and the nascent state of runtime application self-protection technology.

All in all, if this is what passes for “state of the art” in a security curriculum from the second biggest textbook publisher in the world, I’m sort of relieved that information security isn’t a required curriculum in a lot of CS programs. It might be better to learn about application security in particular from a source like OWASP, SANS, or your favorite blog than to read a text as shallow as this.

Ten year lookback: the Trustworthy Computing memo

On the Veracode blog (where I now post from time to time), we had a retrospective on the Microsoft Trustworthy Computing memo, which had its ten year anniversary on the 15th. The retrospective spanned two posts and I’m quoted in the second:

On January 15, 2002, I was in business school and had just accepted a job offer from Microsoft. At the time it was a very different company–hip deep in the fallout from the antitrust suit and the consent decree; having just launched Windows XP; figuring out where it was going on the web (remember Passport?). And the taking of a deep breath that the Trustworthy Computing memo signaled was the biggest sign that things were different at Microsoft.

And yet not. It’s important to remember that a big part of the context of TWC was the launch of .NET and the services around it (remember Passport?). Microsoft was positioning Passport (fka Hailstorm) as the solution for the Privacy component of their Availability, Security, Privacy triad, so TWC was at least partly a positioning memo for that new technology. And it’s pretty clear that they hadn’t thought through all the implications of the stance they were taking: witness BillG’s declaration that “Visual Studio .NET is the first multi-language tool that is optimized for the creation of secure code”. While .NET may have eliminated or mitigated the security issues related to memory management that Microsoft was drowning in at the time, it didn’t do anything fundamentally different with respect to web vulnerabilities like cross-site scripting or SQL injection.

But there was one thing about the TWC memo that was different and new and that did signal a significant shift at Microsoft: Gates’ assertion that “when we face a choice between adding features and resolving security issues, we need to choose security.” As an emerging product manager, that was an important principle for me to absorb–security needs to be considered as a requirement alongside user facing features and needs to be prioritized accordingly. It’s a lesson that the rest of the industry is still learning.

To which I’ll add: it’s interesting what I blogged about this at the time and what I didn’t. As an independent developer I was very suspicious of Hailstorm (later Passport.NET) but hadn’t thought that much about its security implications.
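The point in the quoted passage about managed code and injection flaws can be seen in a few lines. This sketch uses Python and its standard `sqlite3` module as a stand-in for any memory-safe runtime; it is not .NET code, and the table and function names are hypothetical. The runtime prevents buffer overflows, but it will happily run a query that was built by string concatenation.

```python
import sqlite3

# Hypothetical table of per-user secrets, held in memory for the demo.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "a-secret"), ("bob", "b-secret")],
)


def lookup_concatenated(name: str) -> list:
    """Vulnerable: user input becomes part of the SQL text."""
    query = "SELECT secret FROM users WHERE name = '" + name + "'"
    return [row[0] for row in conn.execute(query)]


def lookup_parameterized(name: str) -> list:
    """Safer: user input is bound as data and never parsed as SQL."""
    query = "SELECT secret FROM users WHERE name = ?"
    return [row[0] for row in conn.execute(query, (name,))]


hostile = "nobody' OR '1'='1"
leaked = sorted(lookup_concatenated(hostile))  # every row matches
safe = lookup_parameterized(hostile)           # no user has that literal name
print(leaked, safe)
```

The fix is a coding practice (bound parameters), not a property of the language or the compiler, which is why a tool "optimized for the creation of secure code" could not deliver it by itself.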

The market failure of application security

Part 1 of an as yet indeterminate number of posts about why application security has historically been broken, and what to do about it.

Software runs everything that is valuable for companies or governments. Software is written by companies and purchased by companies to solve business problems. Companies depend on customer data and intellectual property that software manages, stores, and safeguards.

Companies and governments are under attack. Competitors and foreign powers seek access to sensitive data. Criminals seek to access customer records for phishing purposes or for simple theft. Online vigilante groups express displeasure with companies’ actions by seeking to expose or embarrass the company via weaknesses in public facing applications.

Software is vulnerable. All software has bugs, and some bugs introduce weaknesses in the software that an attacker can use to impact the confidentiality, integrity, or availability of software and the data it safeguards.

Market failure

The resources required to fix software vulnerabilities are in contention with other development priorities, including user features, functional bugs, and industry compliance requirements. Because software vulnerabilities are less directly visible to customers than the other items, the application’s business owner gives them a lower priority, and fixing them comes last. As a result, most software suppliers produce insecure software.

Historically, software buyers have not considered security as a purchase criterion for software. Analyst firms including Gartner do not discuss application security when covering software firms and their products. Software vendors do not have a market incentive to create secure software or advertise the security quality of their applications. And software buyers have no leverage to get security fixes from a vendor once they have purchased the software. The marketplace is not currently acting to correct this information asymmetry; this is a classic market failure, specifically a moral hazard failure, in which the buyer does not have any information about the level of risk in the product they are purchasing.

So the challenge for those who would make software more secure is how to create a new dynamic, one in which software becomes more secure over time rather than less. We’ll talk about some ideas that have been tried, without much success, tomorrow.

Risky (software) business

I got interviewed last week for Risky Business, a podcast about application security from Australia. Had a great time chatting with Patrick Gray about security during the application development lifecycle. You can download the episode at their site or listen to it here:

[audio:http://media.risky.biz/RB158.mp3]

Doing secure development in an Agile world

My software development lead and I are doing a webinar next week on how you do secure development within the Agile software development methodology (press release). To make the discussion more interesting, we aren’t talking in theoretical terms; we’ll be talking about what my company, Veracode, actually does during its secure development lifecycle.

No surprise: there’s a lot more to secure development in any methodology than simply “not writing bad code.” Some of the topics we’ll be including are:

- Secure architecture — and how to secure your architecture if it isn’t already

- Writing secure requirements, and security requirements, and how the two are different.

- Threat modeling for fun and profit

- Verification through QA automation

- Static binary testing, or how, when, and why Veracode eats its own dogfood

- Checking up–internal and independent pen testing

- Education–the role of certification and verification

- Oops–the threat landscape just changed. Now what?

- The not-so-agile process of integrating third party code.

It’ll be a brisk but fun stroll through how the world’s first SaaS-based application security firm does business. If you’re a developer or just work with one, it’ll be worth a listen.

Next week: Austin, TX

You’ll be able to catch me in my professional capacity twice next week. I’ll be giving a talk on Tuesday in Austin, TX to the Austin chapter of ISACA (the Information Systems Audit and Control Association) on “Best Practices for Application Risk Management.” The argument: the current frontier in securing sensitive data and systems isn’t the network, it’s the applications securing the data. But just as it’s hard to write secure code, even with conventional testing tools, it’s even harder to get a handle on the risk in code you didn’t write. And, of course, it’s the rare application these days that is 100% code that you wrote. I’ll talk about ways that large and small enterprises can get their arms around the application security challenge.

I’ll also be joining one of our customers to talk in more depth about a key part of Veracode’s application risk management capability, our developer elearning program and platform, in a webinar. If you are interested in learning how to improve application security before the application even gets written, this is a good one to check out.